News

SpaceX Launches Starlink Mini For $599 In Selected Regions

The compact, portable dish features a built-in Wi-Fi router and is small enough to fit inside a backpack.

SpaceX has introduced a new Starlink kit, known as the Starlink Mini, which is compact enough to fit inside a backpack. Users will now be able to carry the miniature dish anywhere and access SpaceX’s satellite internet service on the go.

According to emails sent out by SpaceX, the Starlink Mini costs $599 upfront, $100 more than the standard dish kit. Customers must already have a standard service plan to add the Mini Roam service, which costs an extra $30 per month. That brings the total for Starlink residential customers who opt for the Mini to $150 per month.

“Starlink MINI (REV MINI1_PROD2) with built-in WiFi. 28.9 × 24.8 cm (11.4″ × 9.8″)” pic.twitter.com/0AQKicaOk4
Oleg Kutkov 🇺🇦 (@olegkutkov), June 16, 2024

The cost of the smaller dish might decrease in the future. SpaceX mentioned in its email that it is working towards making Starlink more affordable overall. Currently, the company is offering a limited number of Mini kits “in regions with high usage”. Recently, SpaceX CEO Elon Musk discussed the Mini on X (formerly Twitter), describing it as a “great low-cost option”. He also mentioned that it would eventually cost “about half the price of the standard dish to buy and monthly subscription”.

The Starlink Mini dish includes a built-in Wi-Fi router, making it a more compact package and requiring fewer additional components than the standard version. The Mini also consumes less power, features a DC power input, and can achieve download speeds exceeding 100 Mbps. The new kit includes the dish, a kickstand, a pipe adapter, a power supply, and a cord with a USB-C connector on one end and a barrel jack on the other.

For now, the Starlink Mini is available only in select high-usage regions. However, Michael Nicolls, Vice President of Starlink Engineering, stated on X that the company is ramping up production of the Mini and plans to make it available internationally in the very near future.

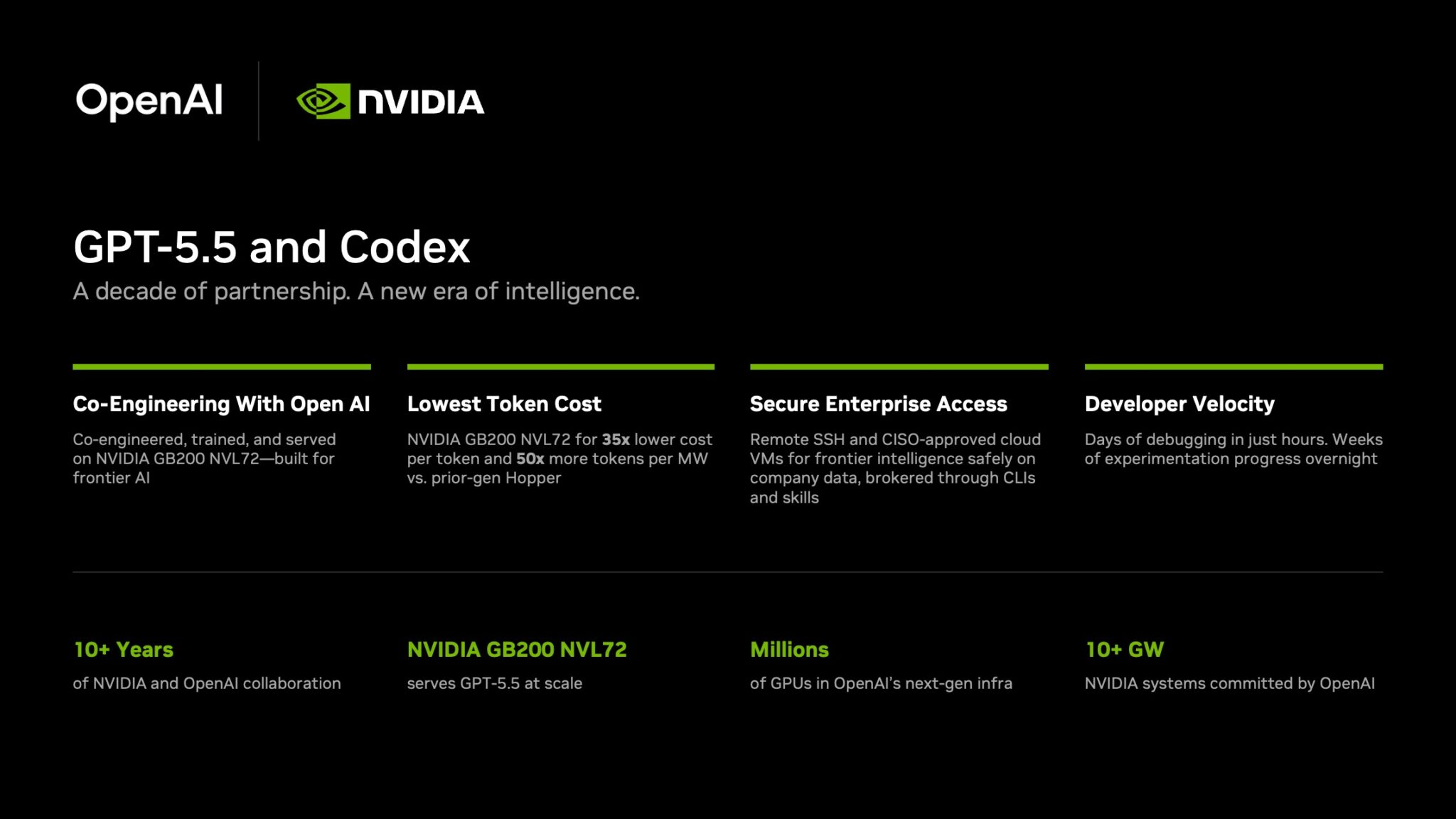

NVIDIA Puts GPT-5.5 Codex In Hands Of 10,000 Staff

The chipmaker has significantly expanded access to OpenAI’s latest model across teams from engineering to HR, under tight internal controls.

NVIDIA has started rolling out OpenAI’s GPT-5.5 model through the Codex coding agent to more than 10,000 employees, extending the tool well beyond software teams and into core business functions.

The deployment covers engineering, product, legal, marketing, finance, sales, HR, operations and developer programs. Staff are using Codex for coding, internal research and routine knowledge work as companies test whether AI agents can move from demos to daily use.

GPT-5.5 is running on NVIDIA’s GB200 NVL72 rack-scale systems, linking OpenAI’s newest model directly to the chipmaker’s latest infrastructure push. NVIDIA said the systems deliver 35 times lower cost per million tokens and 50 times higher token output per second per megawatt than earlier generations.

NVIDIA says the effects inside the company are immediate. Debugging work that once took days is being finished in hours, and experiments across large codebases that used to stretch over weeks are now handled overnight. Teams are also building features from natural-language prompts with fewer failed runs.

In a company-wide note urging staff to adopt the tool, CEO Jensen Huang wrote: “Let’s jump to lightspeed. Welcome to the age of AI.”

Security remains central to the rollout. Codex can connect through Secure Shell to approved cloud virtual machines, allowing agents to work with company data without moving it outside approved environments. NVIDIA said it assigned cloud VMs to employees so agents run in isolated sandboxes with full audit trails.

The company added that the setup uses a zero-data-retention policy. Access to production systems is read-only through command-line tools and internal automation layers.

The move also highlights NVIDIA’s long relationship with OpenAI. NVIDIA said the partnership began in 2016, when Huang personally delivered the first DGX-1 AI supercomputer to OpenAI’s San Francisco office.

The two companies have since worked across hardware and model deployment. NVIDIA also said OpenAI plans to deploy more than 10 gigawatts of NVIDIA systems for future AI infrastructure.

For Gulf markets pouring money into sovereign AI and enterprise automation, the signal is clear: internal AI agents are moving from pilot phase to standard tooling.