News

Truecaller To Use Microsoft Azure AI Speech For Call Answering

The new feature uses a speech generation tool that lets users create AI versions of their own voices.

Truecaller, a well-known app for identifying and blocking spam calls, now lets users create AI versions of their own voices. The feature, available to those with access to Truecaller’s AI Assistant, stems from a partnership with Microsoft and uses its Azure AI Speech tool to generate realistic AI voices that mimic users’ speech patterns and tone.

“This groundbreaking capability not only adds a touch of familiarity and comfort for the users but also showcases the power of AI in transforming the way we interact with our digital assistants,” explained Truecaller product director and general manager Raphael Mimoun in a recent blog post.

The AI Assistant in Truecaller screens incoming calls, informing recipients of a caller’s purpose. Based on this information, users can decide whether to answer the call themselves or let the AI Assistant handle it.

When the feature was introduced in 2022, users could only choose from a collection of preset voices. The ability to record one’s own voice represents a significant step towards the complete personalization of the service.

Azure AI Speech, showcased during the last Build conference, only recently added Personal Voice, a feature that lets people record and replicate voices. Microsoft explained in a blog post, however, that Personal Voice is available on a limited basis and only for specific use cases such as voice assistants.

To maintain ethical standards, Microsoft’s Azure AI Speech automatically adds watermarks to AI-generated voices. Additionally, a code of conduct requires companies to obtain full consent from individuals being recorded and prohibits impersonation.

News

NVIDIA Puts GPT-5.5 Codex In Hands Of 10,000 Staff

The chipmaker has significantly expanded access to OpenAI’s latest model across teams from engineering to HR, under tight internal controls.

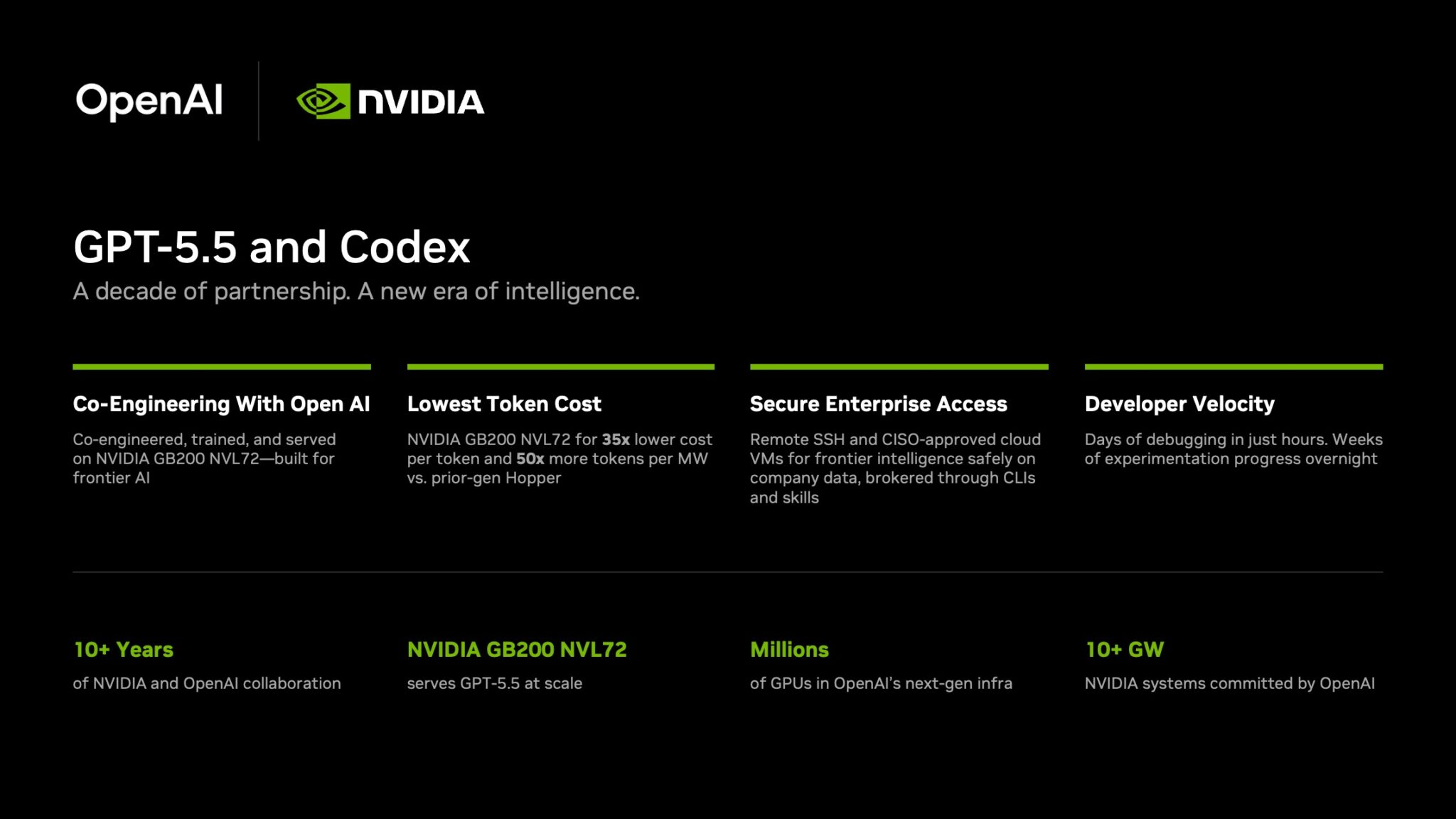

NVIDIA has started rolling out OpenAI’s GPT-5.5 model through the Codex coding agent to more than 10,000 employees, extending the tool well beyond software teams and into core business functions.

The deployment covers engineering, product, legal, marketing, finance, sales, HR, operations and developer programs. Staff are using Codex for coding, internal research and routine knowledge work as companies test whether AI agents can move from demos to daily use.

GPT-5.5 is running on NVIDIA’s GB200 NVL72 rack-scale systems, linking OpenAI’s newest model directly to the chipmaker’s latest infrastructure push. NVIDIA said the systems deliver a 35-fold lower cost per million tokens and 50 times higher token output per second per megawatt than earlier generations.

NVIDIA says the effects inside the company are immediate. Debugging work that once took days is being finished in hours, and experiments across large codebases that used to stretch over weeks are now handled overnight. Teams are also building features from natural-language prompts with fewer failed runs.

In a company-wide note urging staff to adopt the tool, CEO Jensen Huang wrote: “Let’s jump to lightspeed. Welcome to the age of AI.”

Security remains central to the rollout. Codex can connect through Secure Shell to approved cloud virtual machines, allowing agents to work with company data without moving it outside approved environments. NVIDIA said it assigned cloud VMs to employees so agents run in isolated sandboxes with full audit trails.

The company added that the setup uses a zero-data-retention policy. Access to production systems is read-only through command-line tools and internal automation layers.

The move also highlights NVIDIA’s long relationship with OpenAI. NVIDIA said the partnership began in 2016, when Huang personally delivered the first DGX-1 AI supercomputer to OpenAI’s San Francisco office.

The two companies have since worked across hardware and model deployment. NVIDIA also said OpenAI plans to deploy more than 10 gigawatts of NVIDIA systems for future AI infrastructure.

For Gulf markets pouring money into sovereign AI and enterprise automation, the signal is clear: internal AI agents are moving from pilot phase to standard tooling.