News

Truecaller To Use Microsoft Azure AI Speech For Call Answering

The new service features a speech generation tool that lets users create AI versions of their own voices.

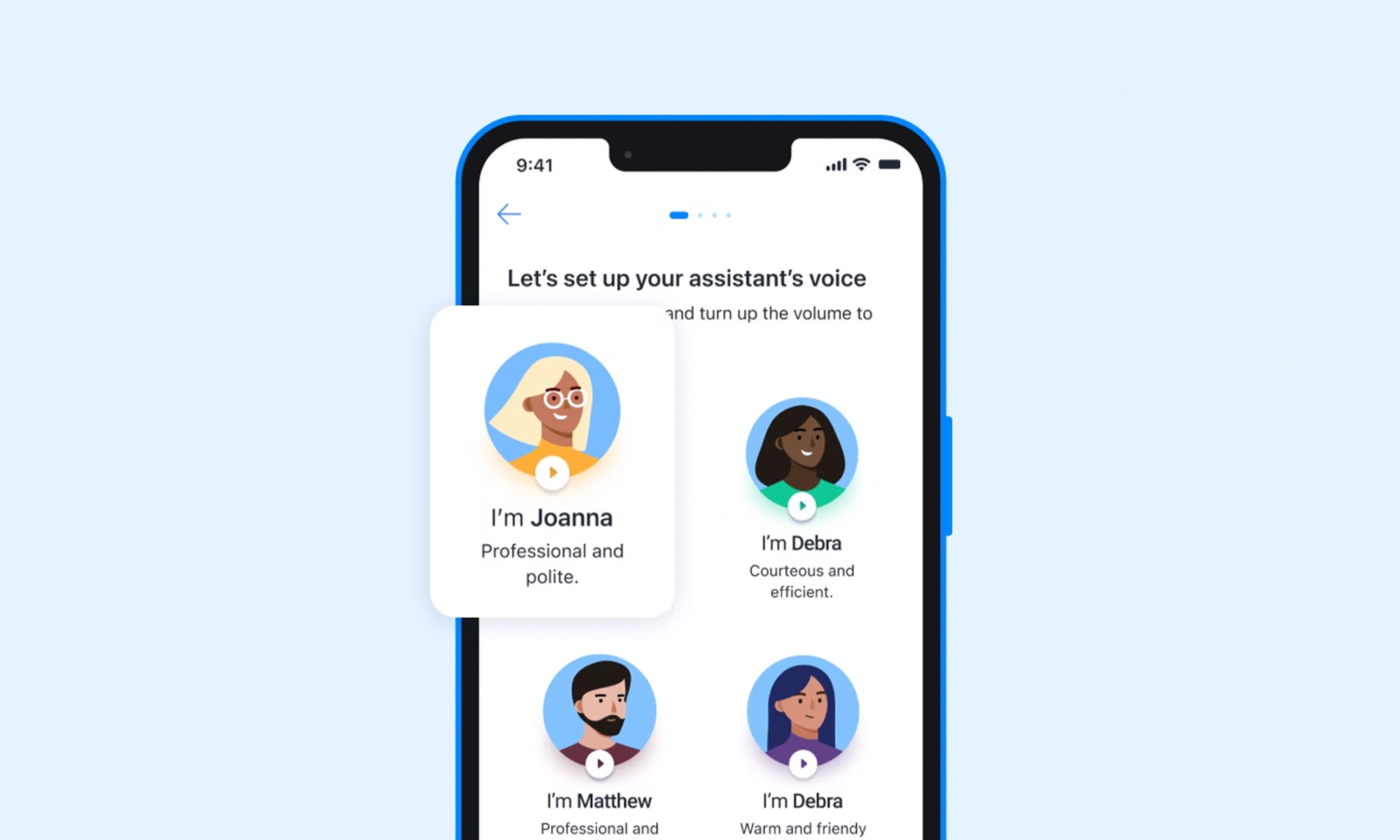

Truecaller, a well-known app for identifying and blocking spam calls, now lets users create AI versions of their own voices. The new feature, available to those with access to Truecaller’s AI Assistant, stems from a partnership with Microsoft and uses its Azure AI Speech tool to generate realistic AI voices that mimic users’ speech patterns and tone.

“This groundbreaking capability not only adds a touch of familiarity and comfort for the users but also showcases the power of AI in transforming the way we interact with our digital assistants,” explained Truecaller product director and general manager Raphael Mimoun in a recent blog post.

The AI Assistant in Truecaller screens incoming calls, informing recipients of a caller’s purpose. Based on this information, users can decide whether to answer the call themselves or let the AI Assistant handle it.

When the feature was introduced in 2022, users could only choose from a collection of preset voices. The ability to record one’s own voice represents a significant step towards the complete personalization of the service.

Azure AI Speech, showcased during the last Build conference, only recently added a personal voice feature that lets people record and replicate voices. Microsoft explained in a blog post, however, that Personal Voice is available on a limited basis and only for specific use cases like voice assistants.

To maintain ethical standards, Microsoft’s Azure AI Speech automatically adds watermarks to AI-generated voices. Additionally, a code of conduct requires companies to obtain full consent from individuals being recorded and prohibits impersonation.

DJI Teases Dual-Camera Osmo Pocket 4P For 2026 Launch

Most technical claims for the new gimbal, however, come from industry leaks rather than DJI’s own announcement.

DJI has teased a dual-camera version of its Osmo Pocket gimbal, confirming that the Osmo Pocket 4P will launch in 2026. The teaser image is the company’s first preview of the device, following months of speculation about a more advanced model in its pocket camera range.

The image shows a slightly larger device than the existing Osmo Pocket 4, with two camera modules mounted above a compact three-axis gimbal. Reports suggest one camera may use a 1-inch sensor paired with a wide-angle lens, while the second may carry a 3x zoom lens — though DJI has not officially confirmed any of these details.

According to leaks circulating ahead of the launch, the Osmo Pocket 4P could support 4K video at up to 240 frames per second, offer 14 stops of dynamic range and include 10-bit D-Log color support. Those features are commonly used by filmmakers who require greater flexibility during color grading and post-production. Reports also point to Hasselblad color tuning, continuing a partnership that has already appeared in some of DJI’s drone cameras, along with up to 128GB of built-in storage that would reduce reliance on external memory cards during longer shoots.

The device is expected to retain features from the existing Osmo Pocket 4, including a three-axis mechanical gimbal, updated ActiveTrack subject tracking and a flip-out touchscreen display. The Osmo Pocket line is aimed at content creators, vloggers, and independent filmmakers seeking compact equipment that can produce usable footage without a larger camera system.

DJI has not provided pricing or a specific launch date beyond the 2026 window. Industry observers expect the Osmo Pocket 4P to cost more than the standard Pocket 4 because of the dual-camera setup and expanded recording capabilities, though no figures have been disclosed. So far, most of the technical detail circulating around the product remains tied to leaks rather than official confirmation.