News

UAE-Based G42 Partners On World’s Fastest AI Supercomputer

The machine, named Condor Galaxy, has been built to assist with generative AI projects and is over 20 times faster than its predecessor.

Condor Galaxy, the world’s “fastest AI training supercomputer”, has been built with assistance from G42, a UAE-based technology holding group. The machine is actually a network of nine interconnected AI supercomputers developed by US-based AI company Cerebras Systems.

The first system in the network, Condor Galaxy 1 (CG-1), is located in Santa Clara, California. It delivers 4 exaFLOPs of AI compute across 54 million cores, which should significantly reduce AI training times.

G42 will use Condor Galaxy to train AI models across a variety of data sets and has already created and tested Arabic bilingual chat, healthcare, and climate study applications.

“Collaborating with Cerebras to rapidly deliver the world’s fastest AI training supercomputer and laying the foundation for interconnecting a constellation of these supercomputers across the world has been enormously exciting,” said Talal Alkaissi, CEO of G42 Cloud. “The partnership brings together Cerebras’ extraordinary compute capabilities, together with G42’s multi-industry AI expertise,” he added.

Training the latest AI models requires enormous computing power and specialized programming skills. ChatGPT, for example, is built on a model with 175 billion parameters and was reportedly trained on about 10,000 Nvidia GPUs.

Condor Galaxy brings genuine innovation to this process, as models can be trained without complex distributed programming. This means that large projects no longer require weeks or even months spent distributing work over thousands of GPUs.

“Many cloud companies have announced massive GPU clusters that cost billions of dollars to build but are extremely difficult to use. Distributing a single model over thousands of tiny GPUs takes months from dozens of people with rare expertise,” noted Andrew Feldman, CEO of Cerebras Systems. “CG-1 eliminates this challenge. Setting up a generative AI model takes minutes, not months, and can be done by a single person,” he added.

The G42 and Cerebras partnership marks another step toward the democratization of AI. The combination of massive computing power and unique AI data sets should produce groundbreaking results and turbocharge hundreds of AI projects around the world.

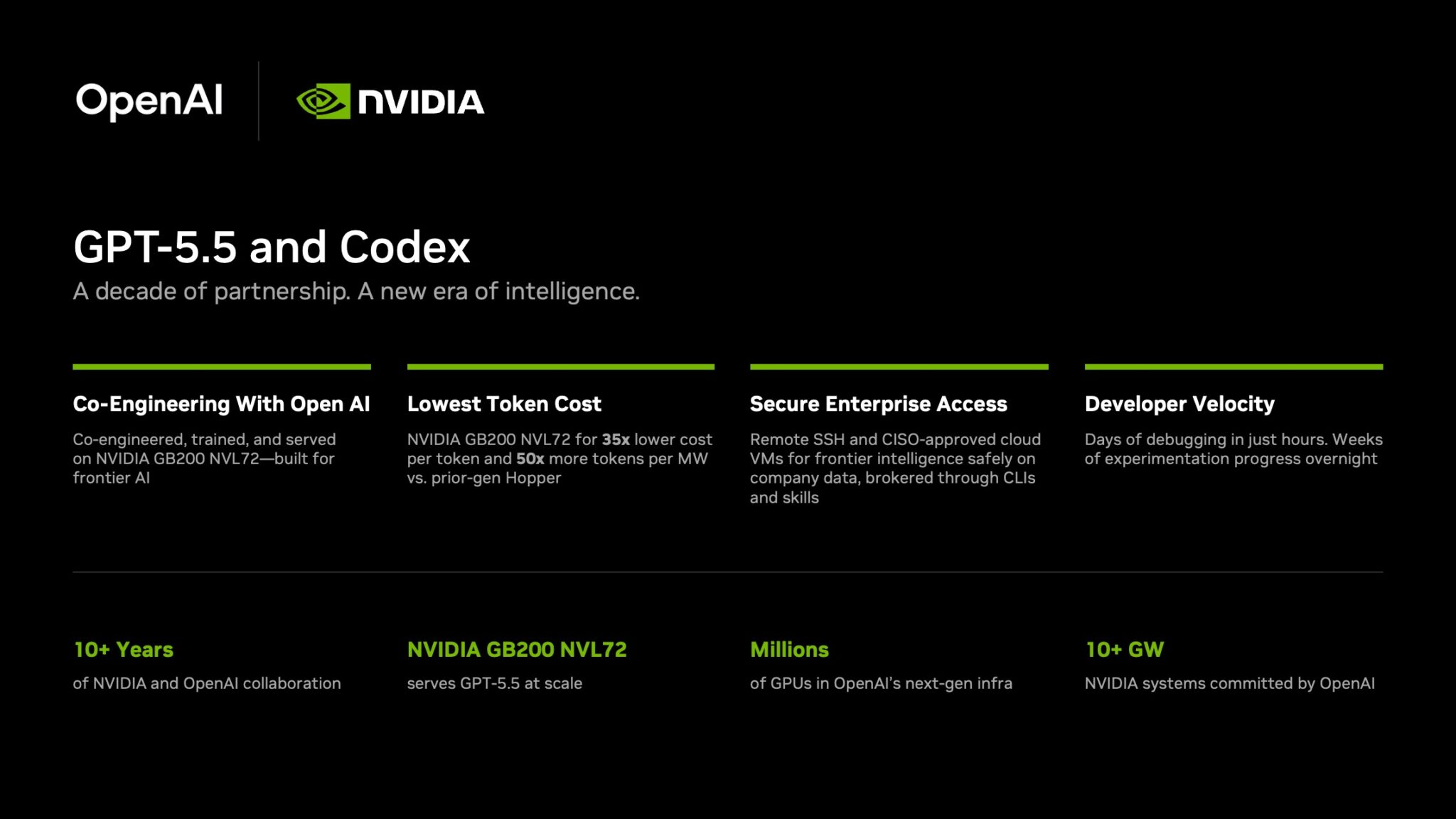

NVIDIA Puts GPT-5.5 Codex In Hands Of 10,000 Staff

The chipmaker has significantly expanded access to OpenAI’s latest model, covering teams from engineering to HR under tight internal controls.

NVIDIA has started rolling out OpenAI’s GPT-5.5 model through the Codex coding agent to more than 10,000 employees, extending the tool well beyond software teams and into core business functions.

The deployment covers engineering, product, legal, marketing, finance, sales, HR, operations and developer programs. Staff are using Codex for coding, internal research and routine knowledge work as companies test whether AI agents can move from demos to daily use.

GPT-5.5 is running on NVIDIA’s GB200 NVL72 rack-scale systems, linking OpenAI’s newest model directly to the chipmaker’s latest infrastructure push. NVIDIA said the systems cut the cost per million tokens by a factor of 35 and raise token output per second per megawatt by a factor of 50 compared with earlier generations.

NVIDIA says the effects inside the company are immediate. Debugging work that once took days is being finished in hours, and experiments across large codebases that used to stretch over weeks are now handled overnight. Teams are also building features from natural-language prompts with fewer failed runs.

In a company-wide note urging staff to adopt the tool, CEO Jensen Huang wrote: “Let’s jump to lightspeed. Welcome to the age of AI.”

Security remains central to the rollout. Codex can connect through Secure Shell to approved cloud virtual machines, allowing agents to work with company data without moving it outside approved environments. NVIDIA said it assigned cloud VMs to employees so agents run in isolated sandboxes with full audit trails.

The company added that the setup uses a zero-data-retention policy. Access to production systems is read-only through command-line tools and internal automation layers.

The move also highlights NVIDIA’s long relationship with OpenAI. NVIDIA said the partnership began in 2016, when Huang personally delivered the first DGX-1 AI supercomputer to OpenAI’s San Francisco office.

The two companies have since worked across hardware and model deployment. NVIDIA also said OpenAI plans to deploy more than 10 gigawatts of NVIDIA systems for future AI infrastructure.

For Gulf markets pouring money into sovereign AI and enterprise automation, the signal is clear: internal AI agents are moving from pilot phase to standard tooling.