News

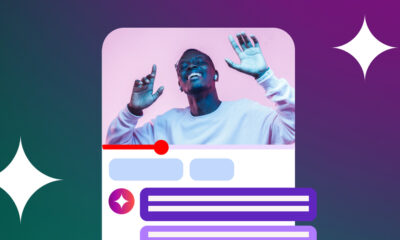

New Artificial Skin For Robots Allows Them To Feel Things

A groundbreaking new development from a Caltech researcher means that robots will soon be able to “feel” their surroundings, with sensations relayed back to human operators.

Wei Gao, an assistant professor of medical engineering at Caltech, has developed a new platform for robots and their operators known as M-Bot. When it hits the mainstream, the technology will allow humans to control robots more precisely and help protect those operators in hostile environments.

The platform is based around an artificial skin that effectively gives robots a sense of touch. The newly developed tool also uses machine learning and forearm sensors to allow human users to control robots with their own movements while receiving delicate haptic feedback through their skin.

The synthetic skin is made of a gelatinous hydrogel and lets robot fingertips function much like our own. Inside the gel, layers of micrometer-scale sensors, applied in a process similar to inkjet printing, detect touch and report it through very gentle electrical stimulation. For example, if a robotic hand gripped an egg too firmly, the skin's sensors would relay the sensation of the shell being crushed to the human operator.

Wei Gao and his Caltech team hope the system will eventually find applications in everything from agriculture and environmental protection to security. The developers also note that robot operators will be able to “feel” their surroundings, including sensing how much fertilizer or pesticide is being applied to crops or whether suspicious bags contain traces of explosives.

Abdulmotaleb El Saddik, Professor of Computer Vision at Mohamed bin Zayed University of Artificial Intelligence, has noted that the new development offers even more applications and possibilities: “The ability to physically feel the touch, including handshakes and shoulder patting, could contribute to creating a sense of connection and empathy, enhancing the quality of interactions, particularly for the elderly and people living at a distance or those who are in space [such as] astronauts connecting with their family and children”.

DJI Teases Dual-Camera Osmo Pocket 4P For 2026 Launch

Most technical claims about the new gimbal come from industry leaks rather than DJI’s own announcement.

DJI has teased a dual-camera version of its Osmo Pocket gimbal, confirming that the Osmo Pocket 4P will launch in 2026. The teaser image is the company’s first preview of the device, following months of speculation about a more advanced model in its pocket camera range.

The image shows a slightly larger device than the existing Osmo Pocket 4, with two camera modules mounted above a compact three-axis gimbal. Reports suggest one camera may use a 1-inch sensor paired with a wide-angle lens, while the second may carry a 3x zoom lens — though DJI has not officially confirmed any of these details.

According to leaks circulating ahead of the launch, the Osmo Pocket 4P could support 4K video at up to 240 frames per second, offer 14 stops of dynamic range and include 10-bit D-Log color support. Those features are commonly used by filmmakers who require greater flexibility during color grading and post-production. Reports also point to Hasselblad color tuning, continuing a partnership that has already appeared in some of DJI’s drone cameras, along with up to 128GB of built-in storage that would reduce reliance on external memory cards during longer shoots.

The device is expected to retain features from the existing Osmo Pocket 4, including a three-axis mechanical gimbal, updated ActiveTrack subject tracking and a flip-out touchscreen display. The Osmo Pocket line is aimed at content creators, vloggers, and independent filmmakers seeking compact equipment that can produce usable footage without a larger camera system.

DJI has not provided pricing or a specific launch date beyond the 2026 window. Industry observers expect the Osmo Pocket 4P to cost more than the standard Pocket 4 because of the dual-camera setup and expanded recording capabilities, though no figures have been disclosed. So far, most of the technical detail circulating around the product remains tied to leaks rather than official confirmation.