News

Everdome Announces First-Ever Metaverse Soundtrack

The interplanetary metaverse project has revealed the opening single from the world’s first metaverse-specific soundtrack.

The single, “Machine Phoenix”, was created in tandem with composer Wojciech Urbański and is released today as part of the first-ever metaverse-specific soundtrack.

The project is called the “Everdome Original Metaverse Soundtrack”. It will provide atmosphere and background ambiance for Everdome’s hyper-realistic landscape, a future Mars settlement built with blockchain and VR technology.

Wojciech Urbański is a gifted composer with a portfolio of award-winning tracks that have been used by the likes of Netflix and Canal+. Wojciech’s Spotify community currently comprises over 600,000 monthly listeners, while overall, his tracks have been played more than 50 million times. The Everdome project hopes to use the composer’s talents to add drama to its hyper-realistic storytelling and visuals.

“Creating a musical illustration of the cosmos and space exploration is probably a dream held by every composer. This collaboration is for me a great artistic opportunity, as the setting of an immersive metaverse world permits the use of a very wide range of sounds and tools,” says Wojciech Urbański.

Metaverse users will hear Wojciech’s work as they launch from Everdome’s virtual Hatta spaceport to the Mars Cycler, situated in low Earth orbit. The music aims to evoke an atmosphere similar to Harry Gregson-Williams’ soundtrack for “The Martian” or Hans Zimmer’s score for “Dune”.

As well as partnering with Wojciech Urbański, Everdome is drawing on the talents of Michał Fojcik, a sound supervisor and designer who has worked on blockbusters including X-Men: Dark Phoenix and Solo: A Star Wars Story.

“Building a sonic background for a metaverse experience is a uniquely challenging task. In the absence of true forms of touch or smell, the visual and the sonic take on huge levels of importance if the experience is to be considered truly hyper-realistic,” says Michał Fojcik, sound supervisor and advisor.

Both artists will work on a soundscape intended to significantly enhance the quality of Everdome’s storytelling. The first single from their collaboration, “Machine Phoenix”, is available from today on all major music distribution platforms, including Spotify, Anghami, and YouTube.

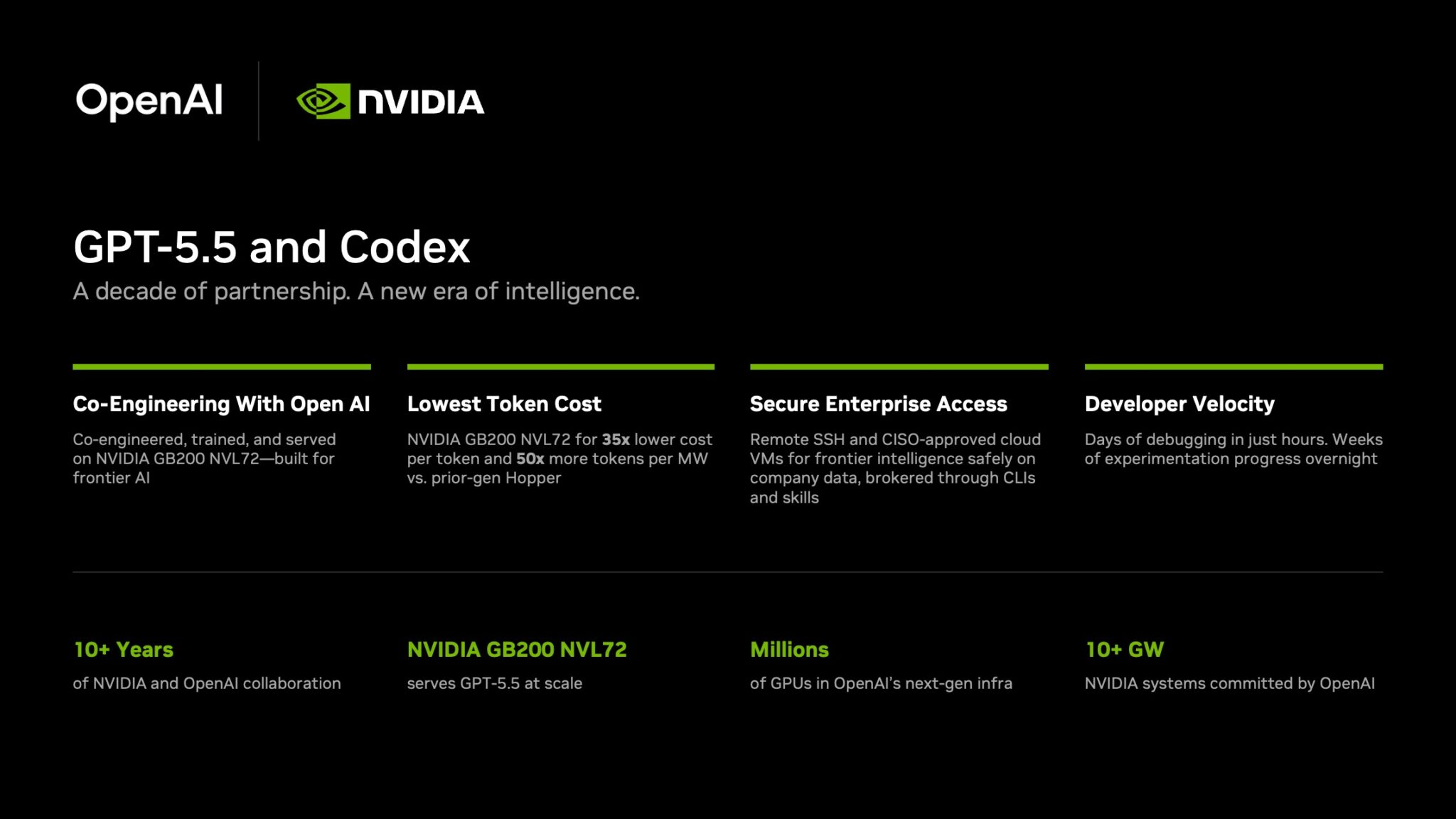

NVIDIA Puts GPT-5.5 Codex In Hands Of 10,000 Staff

The chipmaker has significantly expanded OpenAI’s latest model across teams from engineering to HR under tight internal controls.

NVIDIA has started rolling out OpenAI’s GPT-5.5 model through the Codex coding agent to more than 10,000 employees, extending the tool well beyond software teams and into core business functions.

The deployment covers engineering, product, legal, marketing, finance, sales, HR, operations and developer programs. Staff are using Codex for coding, internal research and routine knowledge work as companies test whether AI agents can move from demos to daily use.

GPT-5.5 is running on NVIDIA’s GB200 NVL72 rack-scale systems, linking OpenAI’s newest model directly to the chipmaker’s latest infrastructure push. NVIDIA said the systems cut the cost per million tokens 35-fold and raise token output per second per megawatt 50-fold compared with earlier generations.

NVIDIA says the effects inside the company are immediate. Debugging work that once took days is being finished in hours, and experiments across large codebases that used to stretch over weeks are now handled overnight. Teams are also building features from natural-language prompts with fewer failed runs.

In a company-wide note urging staff to adopt the tool, CEO Jensen Huang wrote: “Let’s jump to lightspeed. Welcome to the age of AI.”

Security remains central to the rollout. Codex can connect through Secure Shell to approved cloud virtual machines, allowing agents to work with company data without moving it outside approved environments. NVIDIA said it assigned cloud VMs to employees so agents run in isolated sandboxes with full audit trails.

The company added that the setup uses a zero-data-retention policy. Access to production systems is read-only through command-line tools and internal automation layers.

The move also highlights NVIDIA’s long relationship with OpenAI. NVIDIA said the partnership began in 2016, when Huang personally delivered the first DGX-1 AI supercomputer to OpenAI’s San Francisco office.

The two companies have since worked across hardware and model deployment. NVIDIA also said OpenAI plans to deploy more than 10 gigawatts of NVIDIA systems for future AI infrastructure.

For Gulf markets pouring money into sovereign AI and enterprise automation, the signal is clear: internal AI agents are moving from pilot phase to standard tooling.