News

Google Is Developing An AI Cancer-Spotting Microscope

The search giant has teamed up with the US Department of Defense to build the new detection tool.

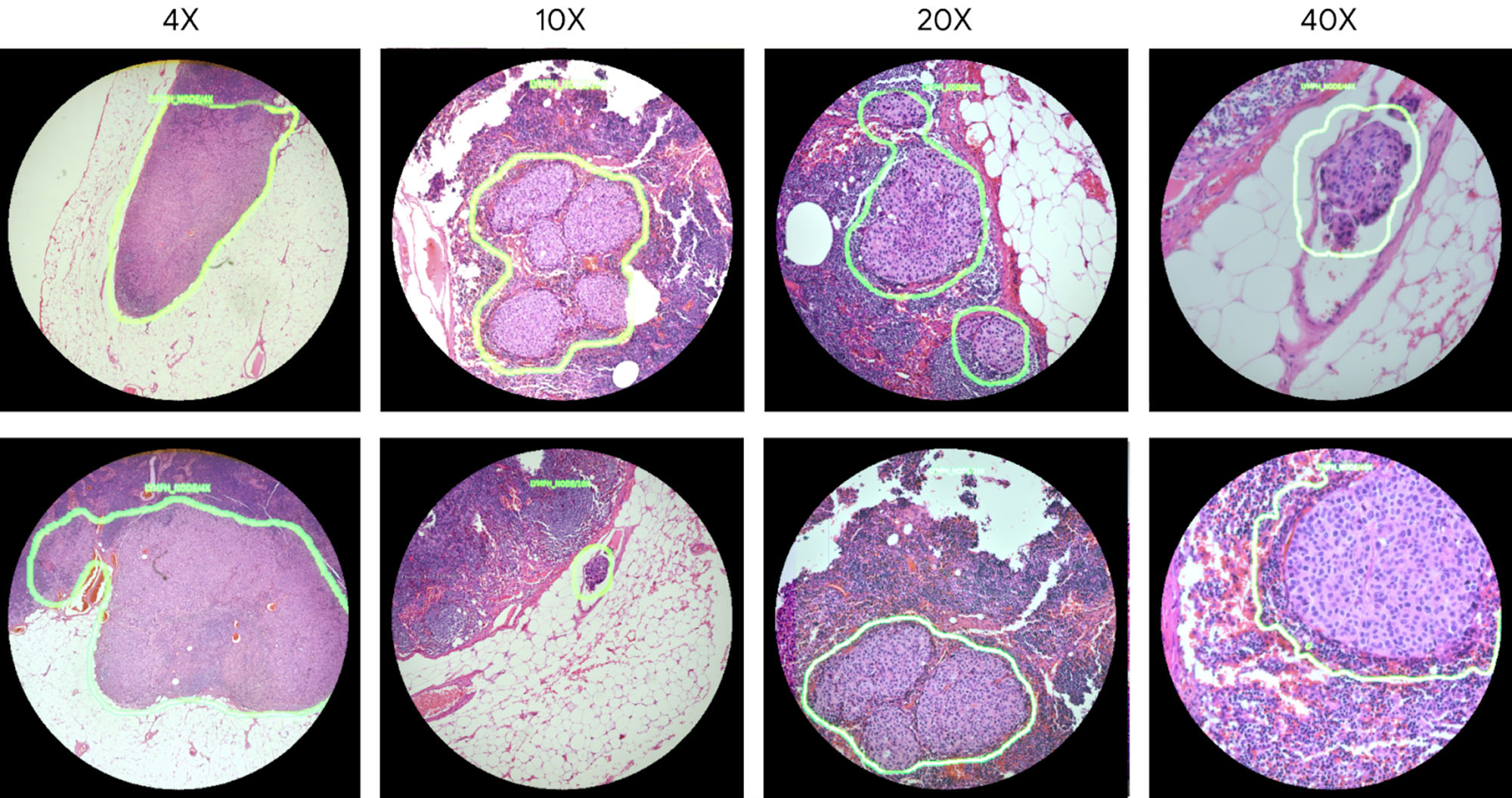

Google has developed an “Augmented Reality Microscope” (ARM) in collaboration with the US Department of Defense. The prototype uses AI to overlay real-time visual indicators, such as heat maps or object boundaries, on the microscope's view, making it easier to identify known pathogens and cancer cells.

The ARM was first teased in 2018, and the latest prototype has still not been used to diagnose real patients. After further testing, Google hopes that the technology will be “retrofitted into existing light microscopes in hospitals and clinics”. Once installed, ARM-equipped microscopes will give clinicians a variety of visual feedback cues, including text, arrows, contours, animations and heat maps.

The US Department of Defense’s “Defense Innovation Unit” has already negotiated agreements with Google to distribute the Augmented Reality Microscope through military channels. The ARM is expected to cost $90,000 to $100,000 per unit, a figure well beyond the reach of many local health providers.


This is not the first time Google Health has invested in AI-powered diagnostic tools. Parent company Alphabet already has a strong record of partnering with startups that invest in AI to “improve healthcare” and is estimated to have spent over $200 billion on AI technology over the past decade. That spending is especially noteworthy at a time when the World Health Organization is predicting a worldwide shortfall of 15 million health care workers by 2030.

News

Nano Banana 2 Arrives In MENA For Google Gemini Users

Google brings its latest image model to Gemini and Search, adding 4K output and tighter text control for regional users.

Google has opened access to Nano Banana 2 across the Middle East and North Africa, pushing its newest image model into everyday tools rather than keeping it inside the exclusive (and expensive) Pro tier.

The rollout spans the Google Gemini desktop and mobile apps, and extends to Google Search through Lens and AI Mode. Developers can also test it in preview via AI Studio and the Gemini API.

Nano Banana 2 runs on Gemini Flash, Google’s fast inference layer. The focus is speed, but also control. Users can export visuals from 512px up to 4K, adjusting aspect ratios for everything from vertical social posts to widescreen displays.

The model maintains character likeness across up to five figures and preserves fidelity for as many as 14 objects within a single workflow. This enables visual continuity across scenes, iterations, or edits — supporting projects like short films, storyboards, and multi-scene narratives. Text rendering has also been improved, delivering legible typography in mockups and greeting cards, with built-in translation and localization directly within images.


Under the hood, the system taps Gemini’s broader knowledge base and pulls in real-time information and imagery from web search to render specific subjects more accurately. Lighting and fine detail have been upgraded, without slowing output.

By embedding the model inside Gemini and Search, Google is normalizing advanced image generation for a mass audience. In MENA, where startups and marketing teams are leaning heavily on AI to scale content across languages and borders, that shift lands at a practical moment.

The move also folds creative tooling deeper into search itself, so that image generation is no longer a separate workflow. It now sits right next to the query box.