News

Samsung Reveals AI Camera Overhaul Ahead Of S26 Launch

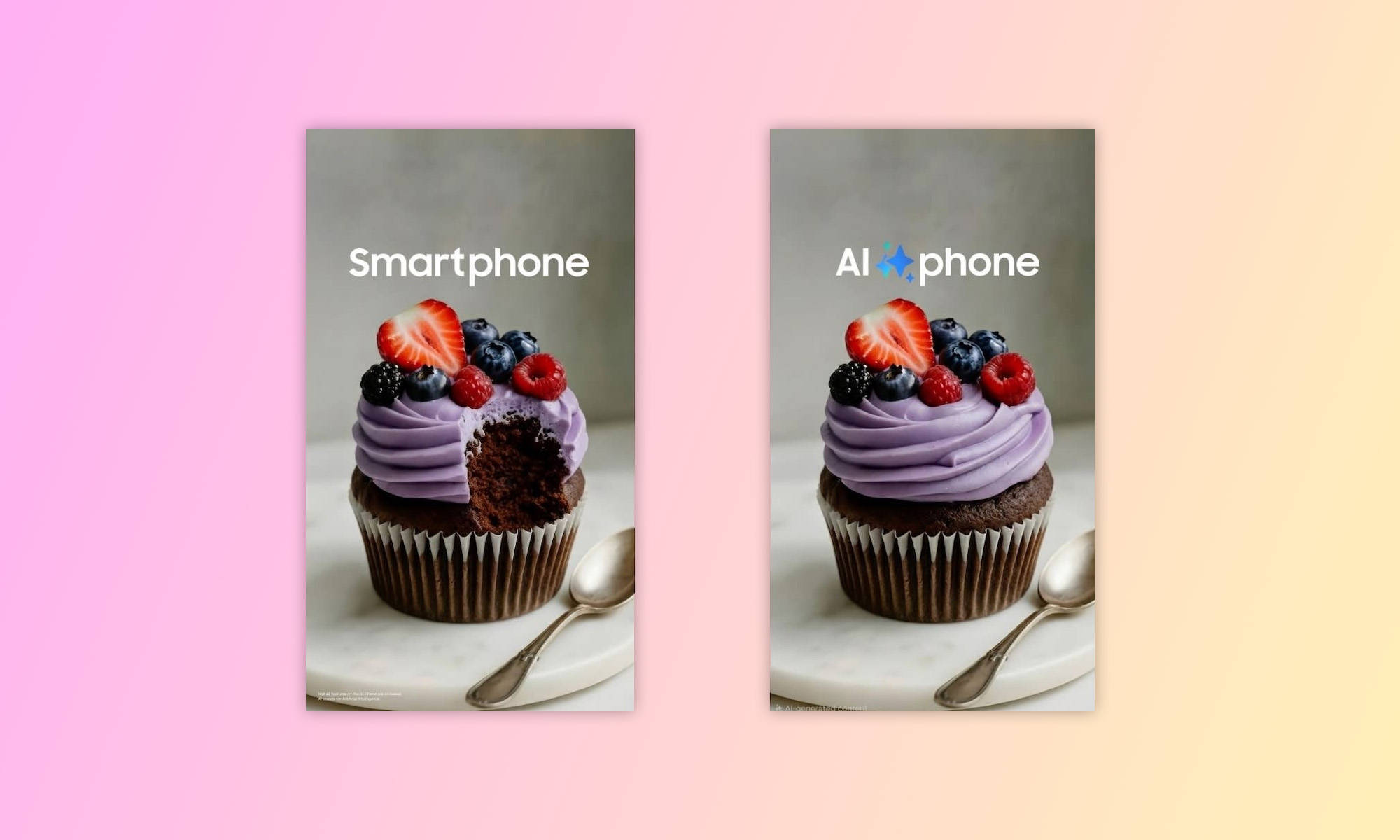

Natural-language photo edits and AI composites will come to the Galaxy camera app ahead of next week's Galaxy S26 launch.

Samsung is baking generative AI directly into its Galaxy camera app, folding advanced editing tools into the core shooting interface ahead of next week’s Galaxy S26 reveal at Galaxy Unpacked.

The update from the Korean tech giant centers on natural-language editing. Users will be able to type or speak commands to alter images inside the native camera environment, with no need to export files or switch to third-party apps.

Among the features Samsung previewed were changing the time of day in a photo, from bright afternoon to night; restoring missing elements, such as filling in a bite taken out of food; and merging subjects from multiple images into a single composite frame. The upgrades mean that tasks that once required desktop software and considerable design skill will soon be available as basic in-camera tools.

The AI features are built on what Samsung calls its “brightest Galaxy camera system ever,” tying the AI layer to upgraded hardware in the S26 line. The company describes the result as a “fluid creative process,” combining capture, editing and sharing in a single workflow.

The timing of Samsung’s announcement is deliberate. Smartphone makers are racing to anchor generative AI in daily use, not as a novelty feature but as basic infrastructure. By embedding editing at the point of capture, Samsung is signaling that the camera app — not a standalone AI tool — is where this shift plays out.

For the Middle East, where mobile-first creators are beginning to drive everything from retail to short-form video production, frictionless editing offers a clear speed advantage. As Gulf markets push ahead with digitalization agendas, devices that compress production time increasingly serve as genuine business tools.

Samsung will detail hardware specifications and the full software stack when the Galaxy S26 series breaks cover at Unpacked. The camera, clearly, is where the company wants the AI story to land.

News

Lebanon Ministers Meet Visa Over National Digital Payment Platform

Finance and technology ministers say a comparative study and roadmap will follow before any decision on adopting a model.

Lebanon’s finance and technology ministers met representatives from Visa last week to discuss a proposed unified national digital payment platform for government services, according to a readout from the Ministry of Finance.

The meeting brought together Finance Minister Yassin Jaber, Minister of State for Technology and Artificial Intelligence Kamal Shehadeh, a Visa delegation, and experts from both ministries. Discussion focused on whether Lebanon could establish a single platform through which citizens and institutions would pay taxes, fees, fines and other official transactions electronically, using mobile phones and other digital channels.

The Visa delegation presented examples from countries that have adopted unified government payment platforms, including the United Arab Emirates, Singapore, Estonia and Jordan. According to the readout, these were presented as cases where such platforms had increased collection rates and expanded financial inclusion.

Talks covered settlement mechanisms, direct transfer to the treasury account, financial reconciliation, risk management, cybersecurity, fees, and an operational model that would involve the private sector. The parties agreed to continue technical and institutional consultations, prepare a comparative study, and develop an implementation roadmap before any decision on adopting a model for Lebanon.

Jaber said the Ministry of Finance had already enabled citizens to pay using credit cards and e-wallets through transfer companies, and described the proposed platform as a further step. He framed the development of electronic payment and collection systems as a priority within the ministry’s modernization plan.

Shehadeh outlined the citizen-facing concept as a single mobile application through which users could settle obligations to ministries, government institutions and other bodies.

“The idea, in short, is that any citizen downloads an application on their mobile phone, through which they can pay all service obligations for all ministries, government institutions, or those owned by the Lebanese state, and others as well, as the platform is not limited only to state institutions,” he said.

Shehadeh added that the platform would not displace banks and money transfer companies that currently provide collection services to the state, calling it complementary to their work.