News

Uber To Add Blade Air Taxi Bookings Through Its App

Joby Aviation will integrate Blade’s air mobility service into the Uber app as early as 2026, a step toward linking airports and cities with quiet, zero-emission electric aircraft.

Electric air taxi firm Joby Aviation and Uber will begin integrating Blade’s urban air mobility services into the Uber app as early as 2026, following Joby’s acquisition of Blade’s passenger business this summer.

Blade carried more than 50,000 passengers in 2024 across New York and Southern Europe, serving high-demand routes between points such as Newark, JFK, Manhattan and the Hamptons. Once folded into Uber, Blade flights will be bookable alongside ground rides, giving users faster, seamless connections in congested cities.

Joby and Uber have been partners in advanced air mobility since 2019, with Joby acquiring Uber’s Elevate division in 2021. That deal gave Joby tools for market modelling and multimodal integration. The Blade acquisition extends that partnership by adding a ready-made network of landing sites and passenger lounges.

Joby’s electric aircraft is designed to carry four passengers and a pilot at speeds of up to 200 mph, with an acoustic footprint the company says is far quieter than a helicopter’s. Joby aims to launch it in markets including Dubai, New York, Los Angeles, the UK and Japan.

JoeBen Bevirt, founder and CEO of Joby, pitched the integration as both a practical step and a long-term signal: “We’re excited to introduce Uber customers to the magic of seamless urban air travel. Integrating Blade into the Uber app is the natural next step in our global partnership with Uber and will lay the foundation for the introduction of our quiet, zero-emissions aircraft in the years ahead. Together with Uber’s global platform and Blade’s proven network, we’re setting the stage for a new era of air travel worldwide.”

Andrew Macdonald, president and COO of Uber, added: “By harnessing the scale of the Uber platform and partnering with Joby, the industry leader in advanced air mobility, we’re excited to bring our customers the next generation of travel.”

For Uber, adding air mobility is less about novelty than keeping users inside its platform for every leg of a journey.

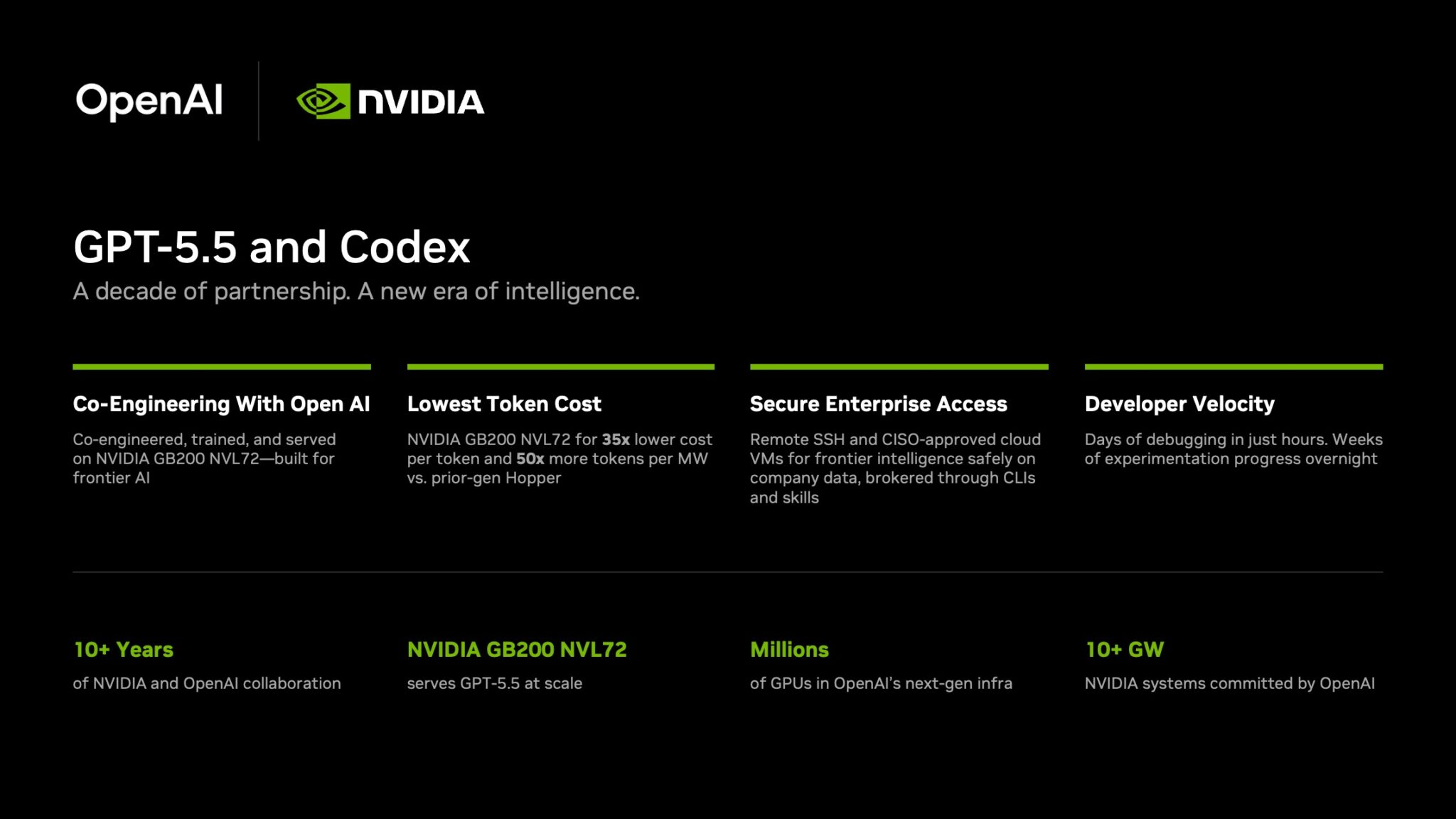

NVIDIA Puts GPT-5.5 Codex In Hands Of 10,000 Staff

The chipmaker has significantly expanded access to OpenAI’s latest model across teams from engineering to HR under tight internal controls.

NVIDIA has started rolling out OpenAI’s GPT-5.5 model through the Codex coding agent to more than 10,000 employees, extending the tool well beyond software teams and into core business functions.

The deployment covers engineering, product, legal, marketing, finance, sales, HR, operations and developer programs. Staff are using Codex for coding, internal research and routine knowledge work as companies test whether AI agents can move from demos to daily use.

GPT-5.5 is running on NVIDIA’s GB200 NVL72 rack-scale systems, linking OpenAI’s newest model directly to the chipmaker’s latest infrastructure push. NVIDIA said the systems deliver a 35-fold lower cost per million tokens and 50 times more token output per second per megawatt than earlier generations.

NVIDIA says the effects inside the company are immediate. Debugging work that once took days is being finished in hours, and experiments across large codebases that used to stretch over weeks are now handled overnight. Teams are also building features from natural-language prompts with fewer failed runs.

In a company-wide note urging staff to adopt the tool, CEO Jensen Huang wrote: “Let’s jump to lightspeed. Welcome to the age of AI.”

Security remains central to the rollout. Codex can connect through Secure Shell to approved cloud virtual machines, allowing agents to work with company data without moving it outside approved environments. NVIDIA said it assigned cloud VMs to employees so agents run in isolated sandboxes with full audit trails.

The company added that the setup uses a zero-data-retention policy. Access to production systems is read-only through command-line tools and internal automation layers.

The move also highlights NVIDIA’s long relationship with OpenAI. NVIDIA said the partnership began in 2016, when Huang personally delivered the first DGX-1 AI supercomputer to OpenAI’s San Francisco office.

The two companies have since worked across hardware and model deployment. NVIDIA also said OpenAI plans to deploy more than 10 gigawatts of NVIDIA systems for future AI infrastructure.

For Gulf markets pouring money into sovereign AI and enterprise automation, the signal is clear: internal AI agents are moving from pilot phase to standard tooling.