News

Hub71 To Invest $2 Billion In New Web3 Startup Ecosystem

Hub71+ Digital Assets will give startups access to venture capital companies and technology providers in Abu Dhabi and beyond.

Abu Dhabi global technology accelerator Hub71 is seeking to disrupt the Web3 space with the announcement of a new ecosystem focused on startup funding and blockchain technologies.

Over $2 billion in capital has already been committed, enabling the new Hub71+ Digital Assets ecosystem to offer Web3 startups access to venture capital companies, customers, tech providers, and blockchain platforms, among other resources.

Hub71’s anchor partner is First Abu Dhabi Bank (FAB), whose research and innovation center, FABRIC, will help the bank offer its financial services in the metaverse. Further corporate, government, and investment partners will support the project’s growth across the UAE and beyond.

“Hub71+ Digital Assets signifies that Abu Dhabi is open to disruptive businesses driving forward change and transformation on a global level. Teaming up with ADGM, FAB and its research and innovation center, FABRIC, alongside the world’s leading Web3 companies and enablers under one roof, will provide founders with an opportunity to fundraise, develop and commercialize innovations safely while operating within the largest regulated jurisdiction of virtual assets in the MENA region,” says Ahmad Ali Alwan, Deputy CEO of Hub71.

The new Hub71 alliance will give startups access to ADGM’s diverse ecosystem and efficient regulatory environment, helping the UAE economy keep pace with global trends.

News

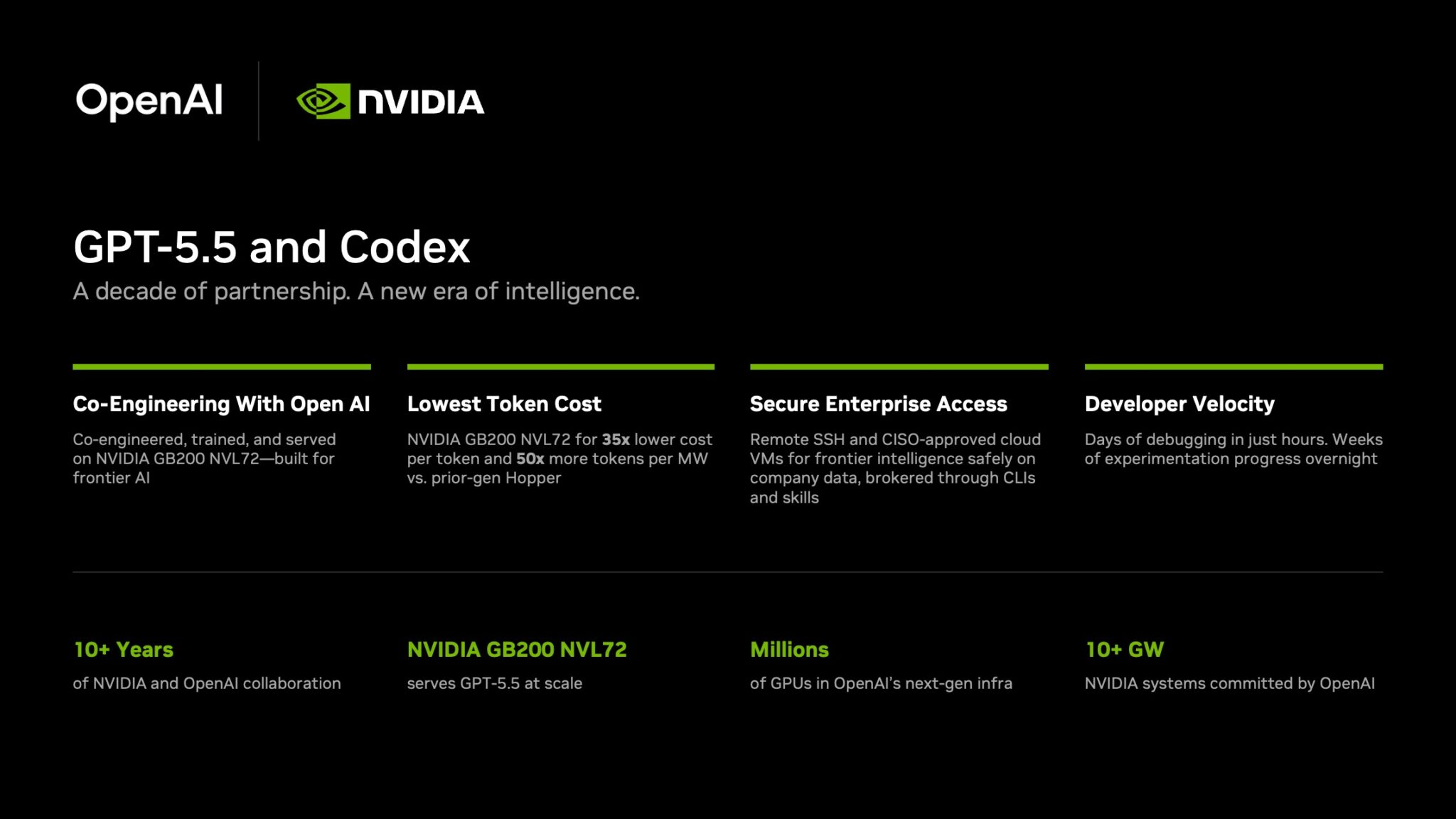

NVIDIA Puts GPT-5.5 Codex In Hands Of 10,000 Staff

The chipmaker has significantly expanded access to OpenAI’s latest model, reaching teams from engineering to HR under tight internal controls.

NVIDIA has started rolling out OpenAI’s GPT-5.5 model through the Codex coding agent to more than 10,000 employees, extending the tool well beyond software teams and into core business functions.

The deployment covers engineering, product, legal, marketing, finance, sales, HR, operations and developer programs. Staff are using Codex for coding, internal research and routine knowledge work as companies test whether AI agents can move from demos to daily use.

GPT-5.5 is running on NVIDIA’s GB200 NVL72 rack-scale systems, linking OpenAI’s newest model directly to the chipmaker’s latest infrastructure push. NVIDIA said the systems cut the cost per million tokens 35-fold and raise token output per second per megawatt 50-fold compared with earlier generations.

NVIDIA says the effects inside the company are immediate. Debugging work that once took days is being finished in hours, and experiments across large codebases that used to stretch over weeks are now handled overnight. Teams are also building features from natural-language prompts with fewer failed runs.

In a company-wide note urging staff to adopt the tool, CEO Jensen Huang wrote: “Let’s jump to lightspeed. Welcome to the age of AI.”

Security remains central to the rollout. Codex can connect through Secure Shell to approved cloud virtual machines, allowing agents to work with company data without moving it outside approved environments. NVIDIA said it assigned cloud VMs to employees so agents run in isolated sandboxes with full audit trails.

The company added that the setup uses a zero-data-retention policy. Access to production systems is read-only through command-line tools and internal automation layers.

The move also highlights NVIDIA’s long relationship with OpenAI. NVIDIA said the partnership began in 2016, when Huang personally delivered the first DGX-1 AI supercomputer to OpenAI’s San Francisco office.

The two companies have since worked across hardware and model deployment. NVIDIA also said OpenAI plans to deploy more than 10 gigawatts of NVIDIA systems for future AI infrastructure.

For Gulf markets pouring money into sovereign AI and enterprise automation, the signal is clear: internal AI agents are moving from pilot phase to standard tooling.