News

Intel Announces Its Range Of 13th Gen Core Processors

Intel’s 13th Gen processors have landed, offering support for DDR5 and DDR4 memory, with the same LGA1700 sockets used by the outgoing generation.

At the Intel Innovation event on September 27th, the processor giant revealed its latest 13th Generation Core processor family. The chips are powered by Intel’s performance hybrid architecture and come in six new unlocked variants for desktop applications.

The new lineup is headed by the monstrous Core i9-13900K and Core i9-13900KF, which utilize 24 cores (eight “P” performance cores and 16 “E” efficiency cores) for a total of 32 threads. The “E” cores sport a base frequency of 2.2GHz, while the “P” cores start at 3.0GHz, though the turbo frequency can push those numbers to 4.3GHz and 5.8GHz, respectively. In terms of power draw, both top-of-the-line processors are rated at 125W base power, with maximum turbo power topping out at 253W.

The range is codenamed “Raptor Lake”, and Intel claims that the entire family of processors will offer users up to a 15% increase in single-threaded performance and up to a 41% increase in multi-threaded performance compared to the outgoing 12th generation.

The rest of the Raptor Lake family comprises two Core i7 and two Core i5 processors, a K and a KF variant of each, with the KF models lacking integrated graphics.

There’s also some good news for system builders and serial upgraders: DDR4 RAM will still be supported alongside DDR5, which is officially rated at speeds of up to 5600MT/s. The new processors also use the same LGA1700 socket configuration, meaning Z690 and Z790 motherboard owners can sample the power of Raptor Lake without the need for a complete system refresh.

As for laptop users, Intel has confirmed that the new 13th Gen processors will be finding their way into portable PCs in the near future, sticking to the familiar naming convention of U, P, H, and HX. The new 13th Gen Raptor Lake range will hit stores from October 20th, though there’s no word on availability levels as yet.

News

NVIDIA Puts GPT-5.5 Codex In Hands Of 10,000 Staff

The chipmaker has significantly expanded OpenAI’s latest model across teams from engineering to HR under tight internal controls.

NVIDIA has started rolling out OpenAI’s GPT-5.5 model through the Codex coding agent to more than 10,000 employees, extending the tool well beyond software teams and into core business functions.

The deployment covers engineering, product, legal, marketing, finance, sales, HR, operations and developer programs. Staff are using Codex for coding, internal research and routine knowledge work as companies test whether AI agents can move from demos to daily use.

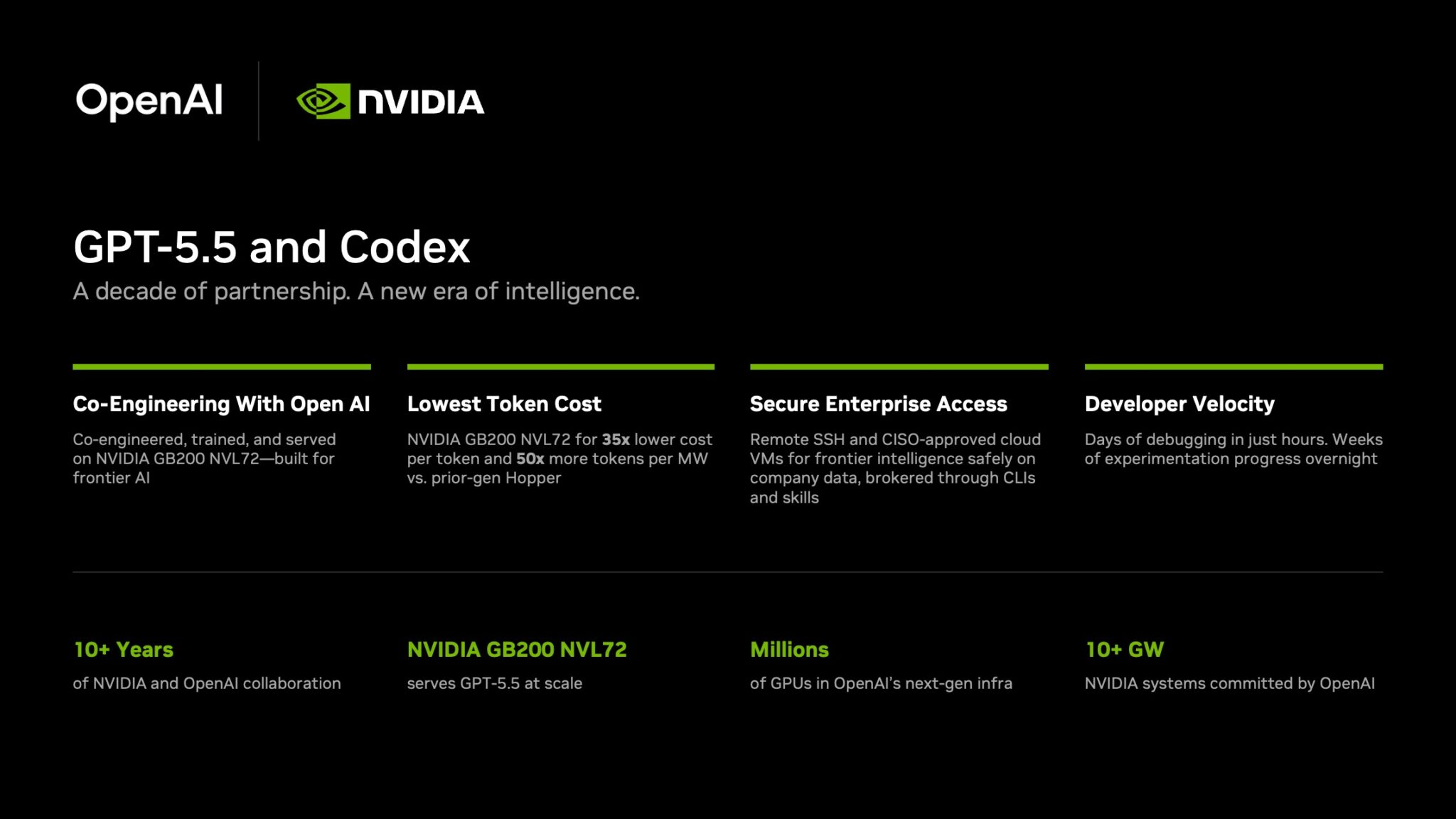

GPT-5.5 is running on NVIDIA’s GB200 NVL72 rack-scale systems, linking OpenAI’s newest model directly to the chipmaker’s latest infrastructure push. NVIDIA said the systems deliver a 35-fold reduction in cost per million tokens and a 50-fold increase in token output per second per megawatt compared with earlier generations.

NVIDIA says the effects inside the company are immediate. Debugging work that once took days is being finished in hours, and experiments across large codebases that used to stretch over weeks are now handled overnight. Teams are also building features from natural-language prompts with fewer failed runs.

In a company-wide note urging staff to adopt the tool, CEO Jensen Huang wrote: “Let’s jump to lightspeed. Welcome to the age of AI.”

Security remains central to the rollout. Codex can connect through Secure Shell to approved cloud virtual machines, allowing agents to work with company data without moving it outside approved environments. NVIDIA said it assigned cloud VMs to employees so agents run in isolated sandboxes with full audit trails.

The company added that the setup uses a zero-data-retention policy. Access to production systems is read-only through command-line tools and internal automation layers.

The move also highlights NVIDIA’s long relationship with OpenAI. NVIDIA said the partnership began in 2016, when Huang personally delivered the first DGX-1 AI supercomputer to OpenAI’s San Francisco office.

The two companies have since worked across hardware and model deployment. NVIDIA also said OpenAI plans to deploy more than 10 gigawatts of NVIDIA systems for future AI infrastructure.

For Gulf markets pouring money into sovereign AI and enterprise automation, the signal is clear: internal AI agents are moving from pilot phase to standard tooling.