News

AI-Powered App Can Tell You How Your Cat Is Feeling

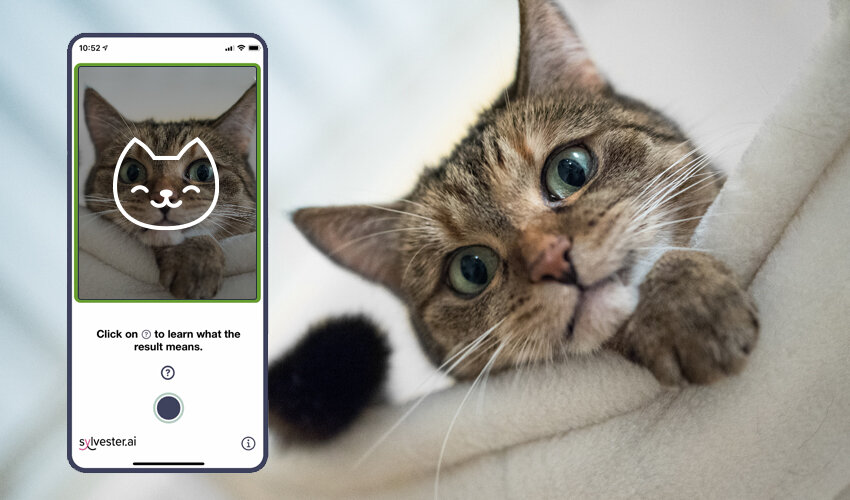

The app can reach an accuracy of up to 97% when given a high-quality, full-face frontal image of the cat.

Are you sometimes unsure whether your cat is tired or plotting your assassination? You’re not alone: cats are notoriously hard to read.

That’s why scientists came up with something called the Feline Grimace Scale, a method of assessing the occurrence or severity of pain experienced by cats according to objective scoring of facial expressions. Now, an Alberta-based animal health technology company called Sylvester.ai has paired the Feline Grimace Scale with an artificial intelligence algorithm to create an app that can tell you how your cat is feeling.

The app is called Tably, and you can download it directly from the App Store. To use it, point your smartphone’s camera at your furry friend and wait a moment while the app analyzes a variety of facial features, including eye narrowing, muzzle tension, and changes in whisker position, to determine how your cat is feeling.
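For readers curious about how a grimace-scale score comes together, here is a minimal sketch in Python. It assumes the scoring scheme of the published Feline Grimace Scale: five facial action units, each rated 0 to 2, summed to a total out of 10, with a total of 4 or more commonly read as a sign that pain relief should be considered. The article does not describe how Tably works internally, so the function names and sample ratings below are hypothetical and purely illustrative, not the app's actual algorithm.

```python
# Illustrative sketch only: this is NOT Tably's algorithm, which the
# article does not describe. It assumes the published Feline Grimace
# Scale scheme: five action units, each rated 0, 1, or 2.
ACTION_UNITS = (
    "ear_position",
    "orbital_tightening",  # the "eye narrowing" mentioned above
    "muzzle_tension",
    "whiskers_change",
    "head_position",
)

# Assumed cutoff: a total of 4 out of 10 or higher suggests pain.
PAIN_THRESHOLD = 4


def grimace_score(unit_scores):
    """Sum the per-unit ratings after checking that each one is valid."""
    missing = set(ACTION_UNITS) - set(unit_scores)
    if missing:
        raise ValueError(f"missing action units: {sorted(missing)}")
    total = 0
    for unit in ACTION_UNITS:
        value = unit_scores[unit]
        if value not in (0, 1, 2):
            raise ValueError(f"{unit} must be 0, 1, or 2, got {value!r}")
        total += value
    return total


def likely_in_pain(unit_scores):
    """Return True when the total reaches the assumed threshold."""
    return grimace_score(unit_scores) >= PAIN_THRESHOLD


# Hypothetical ratings for two cats.
relaxed = dict.fromkeys(ACTION_UNITS, 0)
tense = {
    "ear_position": 1,
    "orbital_tightening": 2,
    "muzzle_tension": 1,
    "whiskers_change": 1,
    "head_position": 0,
}

print(grimace_score(relaxed), likely_in_pain(relaxed))
print(grimace_score(tense), likely_in_pain(tense))
```

In an app like this, the hard part is presumably estimating each rating from a photo; the sketch only covers the arithmetic that turns those ratings into a single score.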

According to Michelle Priest, Tably senior product manager, the app can reach an accuracy of up to 97 percent when given a high-quality, full-face frontal image of the cat. That’s good enough not only for concerned cat owners but also for young veterinarians, who may not yet have the experience needed to tell whether a cat is in pain.

The AI algorithm behind Tably was trained at the Wild Rose Cat Clinic of Calgary. “I love working with cats, have always grown up with cats,” said Dr. Liz Ruelle, DVM, DABVP Feline Specialist at the clinic. “For other colleagues, new grads, who maybe have not had quite so much experience, it can be very daunting to know: is your patient painful?”

Tably is an excellent example of cutting-edge technology being used to positively impact the lives of those who don’t understand it themselves (although you never know with cats).

Nano Banana 2 Arrives In MENA For Google Gemini Users

Google brings its latest image model to Gemini and Search, adding 4K output and tighter text control for regional users.

Google has opened access to Nano Banana 2 across the Middle East and North Africa, pushing its newest image model into everyday tools rather than keeping it inside the exclusive (and expensive) Pro tier.

The rollout spans the Google Gemini desktop and mobile apps, and extends to Google Search through Lens and AI Mode. Developers can also test it in preview via AI Studio and the Gemini API.

Nano Banana 2 runs on Gemini Flash, Google’s fast inference layer. The focus is speed, but also control. Users can export visuals from 512px up to 4K, adjusting aspect ratios for everything from vertical social posts to widescreen displays.

The model maintains character likeness across up to five figures and preserves fidelity for as many as 14 objects within a single workflow. This enables visual continuity across scenes, iterations, or edits — supporting projects like short films, storyboards, and multi-scene narratives. Text rendering has also been improved, delivering legible typography in mockups and greeting cards, with built-in translation and localization directly within images.

Under the hood, the system taps Gemini’s broader knowledge base and pulls in real-time information and imagery from web search to render specific subjects more accurately. Lighting and fine detail have been upgraded, without slowing output.

By embedding the model inside Gemini and Search, Google is normalizing advanced image generation for a mass audience. In MENA, where startups and marketing teams are leaning heavily on AI to scale content across languages and borders, that shift lands at a practical moment.

The move also folds creative tooling deeper into search itself, so that image generation is no longer a separate workflow. It now sits right next to the query box.