News

Japan Sets New Record For Data Transmission Speed

The researchers have absolutely smashed their own previous achievement by transmitting data at a jaw-dropping 1.02 petabits per second.

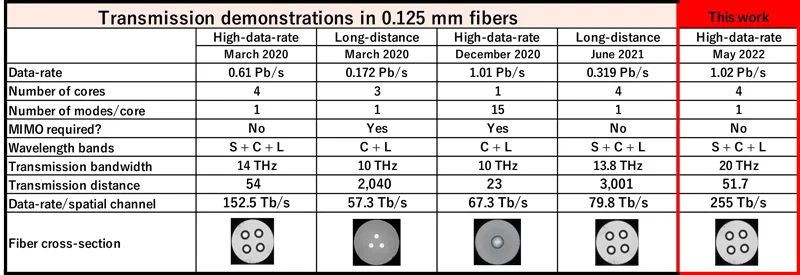

A team of researchers from the National Institute of Information and Communications Technology (NICT) in Japan is at it again. After achieving a data transmission speed of 319 terabits per second (Tb/s) last year, the researchers have now more than tripled their own previous record by transmitting data at 1.02 petabits per second (Pb/s).

Since 1 petabit equals 125,000 gigabytes, 1.02 Pb/s works out to about 127,500 gigabytes per second. That means the team could theoretically transmit more than 31,000 movies in 4K resolution (at roughly 4 GB each) every single second. What makes the record even more impressive is that it was achieved using fiber-optic cable with four cores, exactly the same number used to set the previous record.
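The unit conversion behind these figures can be checked with a short script. This is a sketch that assumes decimal (SI) units and a 4K movie size of about 4 GB. The movie size is not stated in the article; it is only the figure implied by the 31,000-movie estimate. The function name `petabits_to_gigabytes` is hypothetical.

```python
# Decimal (SI) units are assumed: 1 petabit = 10**15 bits, 1 gigabyte = 10**9 bytes.
BITS_PER_BYTE = 8


def petabits_to_gigabytes(petabits: float) -> float:
    """Convert an amount in petabits to decimal gigabytes (hypothetical helper)."""
    bits = petabits * 10**15
    return bits / BITS_PER_BYTE / 10**9


# Throughput figures reported in the article.
new_record_pbps = 1.02   # petabits per second
old_record_tbps = 319    # terabits per second

# Data moved per second at the new record, in gigabytes.
gb_per_second = petabits_to_gigabytes(new_record_pbps)

# Ratio of the new record to the old one (1 Pb = 1,000 Tb).
speedup = (new_record_pbps * 1000) / old_record_tbps

# Assumption: one 4K movie is about 4 GB (implied by the article, not stated in it).
ASSUMED_MOVIE_GB = 4.0
movies_per_second = gb_per_second / ASSUMED_MOVIE_GB

print(f"1 Pb = {petabits_to_gigabytes(1):,.0f} GB")
print(f"1.02 Pb/s = {gb_per_second:,.0f} GB/s")
print(f"Speedup over 319 Tb/s: {speedup:.1f}x")
print(f"4K movies per second at {ASSUMED_MOVIE_GB} GB each: {movies_per_second:,.0f}")
```

Running it prints 125,000 GB per petabit, 127,500 GB/s for the new record, a speedup of about 3.2x over 319 Tb/s, and 31,875 movies per second. Using binary units (gibibytes) instead would give somewhat smaller numbers.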

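“NICT constructed the transmission system using 4-core MCF with standard 0.125 mm cladding diameter, WDM technology and mixed optical amplification systems. The system allowed a data transmission speed of 1.02 petabit per second over 51.7 km,” explained the researchers in the official press release. MCF stands for multi-core fiber, and WDM for wavelength-division multiplexing, which carries many signals at different wavelengths of light through the same fiber.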

The mind-blowing record was first presented in May at the Conference on Lasers and Electro-Optics (CLEO) 2022 in San Jose, California, one of the largest international conferences related to optical devices and systems.


Moving forward, the NICT team wants to continue exploring ways to transmit data faster across fiber-optic cables. Their main focus is on low-core-count multi-core fibers with standard cladding diameter, because such cables are comparable to standard single-mode fibers and are therefore more attractive for early adoption.

With dozens of countries around the world actively moving from 4G to 5G broadband cellular networks, the massive amount of data being sent and received is all but certain to keep growing rapidly. Research projects such as the one behind the latest record can pave the way for new fibers capable of meeting that demand and supporting new bandwidth-hungry services.


NVIDIA Puts GPT-5.5 Codex In Hands Of 10,000 Staff

The chipmaker has significantly expanded access to OpenAI’s latest model across teams from engineering to HR, under tight internal controls.

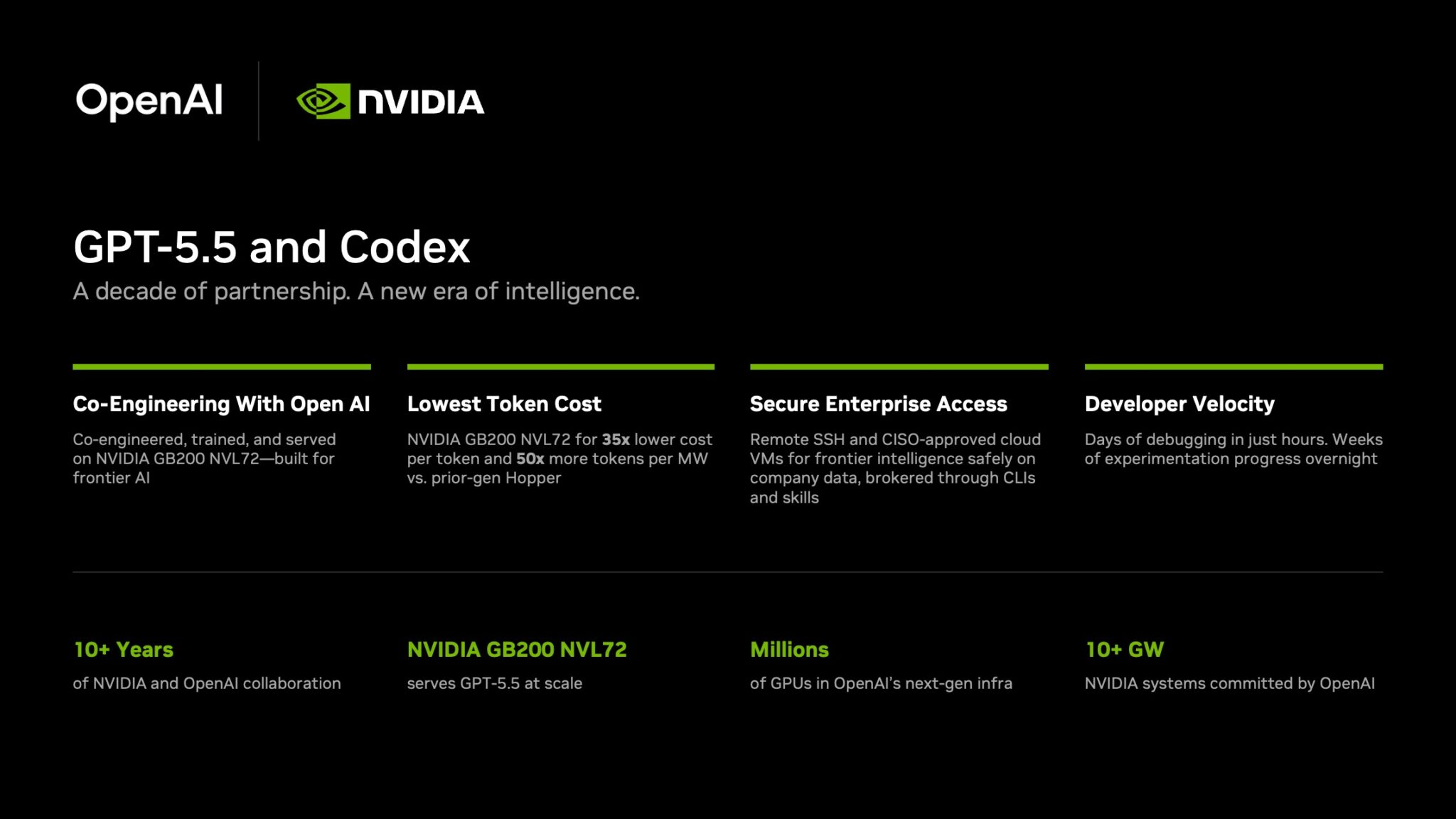

NVIDIA has started rolling out OpenAI’s GPT-5.5 model through the Codex coding agent to more than 10,000 employees, extending the tool well beyond software teams and into core business functions.

The deployment covers engineering, product, legal, marketing, finance, sales, HR, operations and developer programs. Staff are using Codex for coding, internal research and routine knowledge work as companies test whether AI agents can move from demos to daily use.

GPT-5.5 is running on NVIDIA’s GB200 NVL72 rack-scale systems, linking OpenAI’s newest model directly to the chipmaker’s latest infrastructure push. NVIDIA said the systems cut the cost per million tokens 35-fold and raise token output per second per megawatt 50-fold compared with earlier generations.
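Written as ratios, the two claimed multipliers work out as follows. The symbols are introduced here for illustration only: $C$ is cost per million tokens and $T$ is token output per second per megawatt, each compared with an earlier-generation system. NVIDIA did not give absolute figures, so only the relative change is shown.

$$
C_{\text{new}} = \frac{C_{\text{earlier}}}{35} \approx 0.029\,C_{\text{earlier}}, \qquad 1 - \frac{1}{35} \approx 0.97
$$

$$
T_{\text{new}} = 50\,T_{\text{earlier}}
$$

In other words, a 35-fold cut means paying roughly 3% of the earlier cost per million tokens (about 97% less), while the same power budget would yield 50 times as many tokens per second.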

Inside the company, NVIDIA says the effects have been immediate. Debugging work that once took days is being finished in hours, and experiments across large codebases that used to stretch over weeks are now handled overnight. Teams are also building features from natural-language prompts with fewer failed runs.

In a company-wide note urging staff to adopt the tool, CEO Jensen Huang wrote: “Let’s jump to lightspeed. Welcome to the age of AI.”

Security remains central to the rollout. Codex can connect through Secure Shell (SSH) to approved cloud virtual machines, allowing agents to work with company data without moving it outside approved environments. NVIDIA said it assigned cloud VMs to employees so agents run in isolated sandboxes with full audit trails.


The company added that the setup uses a zero-data-retention policy. Access to production systems is read-only and runs through command-line tools and internal automation layers.

The move also highlights NVIDIA’s long relationship with OpenAI. NVIDIA said the partnership began in 2016, when Huang personally delivered the first DGX-1 AI supercomputer to OpenAI’s San Francisco office.

The two companies have since worked across hardware and model deployment. NVIDIA also said OpenAI plans to deploy more than 10 gigawatts of NVIDIA systems for future AI infrastructure.

For Gulf markets pouring money into sovereign AI and enterprise automation, the signal is clear: internal AI agents are moving from pilot phase to standard tooling.